Training-Free Bayesianization for Low-Rank Adapters of Large Language Models

December 10, 2024

Haizhou Shi

Equal contribution

Yibin Wang

Equal contribution

Ligong Han

Dimitris Metaxas

Huan Zhang

Hao Wang

Abstract

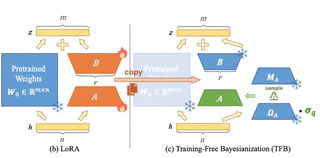

Estimating the uncertainty of responses of Large Language Models (LLMs) remains a critical challenge. While recent Bayesian methods have demonstrated effectiveness in quantifying uncertainty through low-rank weight updates, they typically require complex fine-tuning or post-training procedures. In this paper, we propose Training-Free Bayesianization (TFB), a novel framework that transforms existing off-the-shelf trained LoRA adapters into Bayesian ones without additional training. TFB systematically searches for the maximally acceptable level of variance in the weight posterior, constrained within a family of low-rank isotropic Gaussian distributions. We theoretically demonstrate that under mild conditions, this search process is equivalent to variational inference for the weights. Through comprehensive experiments, we show that TFB achieves superior uncertainty estimation and generalization compared to existing methods while eliminating the need for complex training procedures. Code will be available at this https URL.
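
The variance search described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: it assumes a single linear layer with a LoRA-style update `W0 + B @ A`, plain isotropic Gaussian noise added to `A`, a mean-squared-error loss on a small anchor set, and a hypothetical `tolerance` that defines what "acceptable" degradation means. The names `perturbed_loss`, `search_max_sigma`, and `tolerance` are illustrative only, and the binary search assumes the loss grows monotonically with the noise level.

```python
import numpy as np


def perturbed_loss(W0, B, A, X, y, sigma, n_samples=8, seed=0):
    """Mean squared error averaged over noisy draws of the low-rank factor A.

    A fixed seed reuses the same noise draws for every sigma, so the loss
    is a smooth function of sigma (an assumption made for this toy search).
    """
    rng = np.random.default_rng(seed)
    losses = []
    for _ in range(n_samples):
        # Isotropic Gaussian perturbation of the low-rank factor.
        A_noisy = A + sigma * rng.standard_normal(A.shape)
        W = W0 + B @ A_noisy
        losses.append(np.mean((X @ W.T - y) ** 2))
    return float(np.mean(losses))


def search_max_sigma(loss_at, tolerance, sigma_hi=1.0, n_steps=20):
    """Binary search for the largest sigma whose loss increase over the
    unperturbed adapter stays within `tolerance` (hypothetical criterion)."""
    base = loss_at(0.0)
    lo, hi = 0.0, sigma_hi
    for _ in range(n_steps):
        mid = 0.5 * (lo + hi)
        if loss_at(mid) - base <= tolerance:
            lo = mid  # still acceptable: try a larger variance
        else:
            hi = mid  # too much degradation: shrink the variance
    return lo


# Toy "trained adapter": the targets are produced by the adapter itself,
# so the unperturbed loss is zero.
rng = np.random.default_rng(42)
d_out, d_in, rank, n = 4, 6, 2, 32
W0 = rng.standard_normal((d_out, d_in))
B = rng.standard_normal((d_out, rank))
A = rng.standard_normal((rank, d_in))
X = rng.standard_normal((n, d_in))
y = X @ (W0 + B @ A).T

tolerance = 0.05


def loss_at(sigma):
    return perturbed_loss(W0, B, A, X, y, sigma)


sigma_star = search_max_sigma(loss_at, tolerance)
print(f"largest acceptable sigma: {sigma_star:.4f}")
```

No gradient step is taken anywhere in the sketch; the only work is repeated forward evaluation on the anchor data, which is the sense in which such a search is training-free.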

Type

Publication

Advances in Neural Information Processing Systems (NeurIPS), 2025

Authors

Yibin Wang

(he/him)

Incoming Ph.D. student

I am an incoming Ph.D. student in the Computer Science Department at Rutgers University. I received my Bachelor’s degree from Huazhong University of Science and Technology in 2024. I was advised by Prof. Kun He @ HUST, Prof. Hao Wang @ Rutgers, and Prof. Huan Zhang @ UIUC.

From such a gentle thing, from such a fountain of all delight, my every pain is born.

—— Michelangelo